Integrations are the backbone of modern organizations, which makes the integration specialist a critical role. But what exactly does this role encompass? As businesses adopt cloud-based applications while maintaining legacy systems, the demand for reliable connectivity keeps growing. Modern apps rely heavily on APIs, ERP systems must exchange data with the applications around them, and data has to flow across platforms without interruption. The central challenge is ensuring reliable and efficient communication between diverse systems.

The Essential Role of Integration Specialists

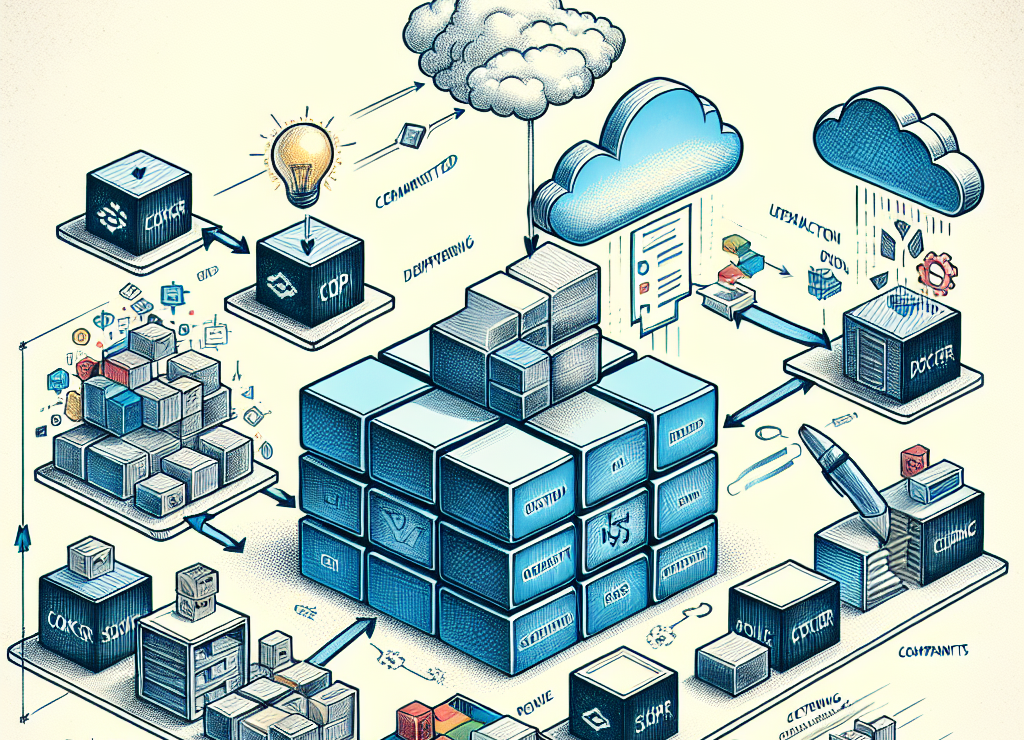

As technology evolves, the demand for skilled integration specialists has grown rapidly. These professionals excel in critical areas such as legacy system migration, cloud-native development, and data management. A key focus is preparing data (cleaning it, standardizing formats, and reconciling schemas) so that existing systems and emerging technologies can exchange it reliably. With expertise in APIs, application packaging, and containerization, they design and implement solutions that bridge the gaps between diverse platforms. By creating and maintaining robust integration frameworks, they keep data flowing smoothly and foster collaboration across an organization’s technology ecosystem. Through their efforts, integration specialists drive efficiency, spark innovation, and pave the way for sustainable growth.

Collaboration at the Heart of Integration

Integration specialists are more than technical experts—they are essential collaborators. Acting as a bridge between IT, operations, and business units, they work closely with teams to understand their specific workflows, challenges, and goals. This collaborative approach ensures the integration process is tailored, efficient, and aligned with organizational needs. By leveraging their skills in data mapping, transformation, and API development, they create robust, scalable solutions that facilitate seamless communication between disparate systems.

Integration specialists must also excel in project management. They skillfully coordinate efforts across departments, manage timelines and budgets, and deliver regular progress updates. By addressing challenges quickly and efficiently, they keep implementations on track. Their expertise in handling complex projects ensures integrations are completed on schedule and aligned with overarching business goals.

One of their standout strengths is customization. Integration specialists design tailored solutions that fit an organization’s unique workflows and processes. By aligning integrations with specific business needs, they help unlock greater efficiency, boost productivity, and enhance overall effectiveness.

Role of an Integration Specialist

In a world where digital ecosystems grow more intricate by the day, integration specialists play a pivotal role in ensuring businesses stay connected, competitive, and poised for innovation. Their expertise in creating seamless integrations is not just a technical asset—it’s a strategic advantage.

Specifically, integration specialists are responsible for:

- Data mapping: Integration specialists map data fields between systems so that information is transferred accurately from one system to another. This involves understanding the structure and format of data in each system, as well as any transformations needed along the way.

- API development: APIs (Application Programming Interfaces) allow different software systems to communicate with each other. Integration specialists design and develop APIs that enable seamless communication between various systems, streamlining processes and improving efficiency.

- System testing: Before an integration can be considered successful, it must be thoroughly tested to confirm that all data is transferred correctly and that no errors occur. Integration specialists perform rigorous testing to identify potential issues and make the necessary adjustments.

- Troubleshooting: Integration specialists are responsible for troubleshooting any issues that arise during the integration process. This requires strong problem-solving skills and a deep understanding of the systems being integrated.

- Documentation: In addition to designing and developing APIs, integration specialists also document their work to ensure that others can easily understand and maintain the integration in the future. This documentation includes details on how the systems communicate, potential issues, and troubleshooting steps.

- Continuous improvement: As technology advances and business needs change, integration specialists must regularly review and improve existing integrations. They stay up to date on new technologies and tools that can enhance integration processes, and they recommend improvements.
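
To make the data mapping and testing items above more concrete, here is a minimal Python sketch of a field-level mapping with a simple check. The source field names (`CustNo`, `Name`, `Created`), the target schema, and the date format are all hypothetical, chosen only for illustration; real integrations typically rely on a dedicated integration platform or ETL tool rather than hand-written code like this.

```python
from datetime import datetime

# Hypothetical mapping from a legacy export to a target schema.
# Each entry: target field -> (source field, transform function).
FIELD_MAP = {
    "customer_id": ("CustNo", int),
    "full_name": ("Name", str.strip),
    "signup_date": (
        "Created",
        # Assumes the legacy system stores dates as DD/MM/YYYY.
        lambda s: datetime.strptime(s, "%d/%m/%Y").date().isoformat(),
    ),
}


def map_record(source: dict) -> dict:
    """Translate one source record into the target schema."""
    target = {}
    for target_field, (source_field, transform) in FIELD_MAP.items():
        if source_field not in source:
            # Fail loudly instead of silently dropping data.
            raise KeyError(f"missing source field: {source_field}")
        target[target_field] = transform(source[source_field])
    return target


legacy = {"CustNo": "1042", "Name": "  Ada Lovelace ", "Created": "03/02/2021"}
mapped = map_record(legacy)

# A basic test: the mapped record should match the expected target shape.
assert mapped == {
    "customer_id": 1042,
    "full_name": "Ada Lovelace",
    "signup_date": "2021-02-03",
}
```

Keeping the mapping in a single table like `FIELD_MAP` also supports the documentation item: the table itself records which source field feeds which target field and how it is transformed.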

Furthermore, integration specialists can offer insights and recommendations on best practices for integrating systems, drawing from their experience working with various organizations in different industries. This can help businesses make informed decisions when it comes to implementing integrations and avoid common pitfalls or mistakes.

Skills Needed to Be an Integration Specialist

We’ve touched on the role of an integration specialist and the skills they bring to the table. But what exactly does someone need to know, and what kind of training is required to become one?

To be an effective integration specialist, one must have a combination of technical and soft skills. These include:

- Technical Skills: A strong understanding of programming languages, API development, database management, and data mapping techniques is essential for an integration specialist. They should also be familiar with a range of system integration tools and platforms.

- Business Acumen: Integration specialists need to understand the business operations and objectives of the organizations they work with. This helps them identify key areas where integrations can add value and improve processes.

- Problem-Solving Abilities: Integrating systems often involves complex challenges that require creative solutions. An integration specialist needs to have strong problem-solving skills to troubleshoot issues, identify root causes, and find effective solutions.

- Attention to Detail: With multiple systems involved, even small errors can have significant consequences. Integration specialists must pay close attention to detail and carefully test each integration to ensure accuracy and efficiency.

- Time Management: Integrating systems can be a time-consuming process that requires coordination with different teams and stakeholders. Integration specialists need to manage their time effectively to meet project deadlines and deliver high-quality results.

- Communication Skills: As an integration specialist, you will work with various teams including developers, IT professionals, and business users. Strong communication skills are essential for explaining technical concepts, discussing requirements, and collaborating with others effectively.

- Continuous Learning: Technology is constantly evolving, and as an integration specialist, you need to keep up with the latest trends and best practices. This requires a passion for continuous learning and a willingness to explore new tools and techniques.

- Project Management: Integrating systems often involves working on complex projects with multiple stakeholders and tight deadlines, so project management skills can greatly benefit an integration specialist. These skills include organization, task prioritization, and resource allocation. Project management tools and Agile methods such as Scrum can help keep integration projects on track.

This dynamic and evolving role is perfectly suited for highly experienced application and system architects. While expertise is essential, the position requires a unique blend of technical proficiency and strong interpersonal skills—a combination that can be challenging to find. However, with the increasing demand for integration specialists, organizations are investing in training and development programs to cultivate talent within their own teams.

Conclusion

The role of an integration specialist is becoming increasingly critical in today’s tech-driven world. As organizations need to connect more systems and applications, these specialists play a key role in keeping operations smooth and efficient. Their blend of technical expertise and strong soft skills lets them bridge gaps between different technologies and teams, enabling organizations to achieve their digital transformation goals.

As the demand for integration specialists continues to rise, it presents a great opportunity for those with relevant skills and experience. Whether you’re an experienced application or system architect looking to transition into this role or a fresh graduate interested in pursuing a career in integration, there are ample opportunities available across industries.
